Sep 4, 2017 Comments Off on Enhanced Coinduction Main Page

Enhanced Coinduction Main Page

NII Shonan Meeting on

Enhanced Coinduction

Shonan village center, Kanagawa, Japan

12 – 17 Nov, 2017

Travel Information

Information provided by Shonan village center

Objective

The proof and definition principle of induction is standard in mathematics and computer science. Recently, it is becoming increasingly clear that coinduction, which is formally dual to induction, provides a foundation for the behaviour of many kinds of structures in computer science, including: various calculi in process algebra; infinite data structures in functional programming and theorem provers; automata and formal languages; dynamical systems; behavioural and modal logics; and many more.

Since the early days of coinduction, several extensions and generalisations — typically referred to as “enhancements” — of coinduction have been developed. These extended techniques involve generalisations of the notion of bisimulation, such as bisimulation up to equivalence, bisimulation up to congruence, and bisimulation up to bisimilarity. These techniques all have in common that they strongly enhance the applicability of coinduction by allowing the bisimulation proof method to be based on much smaller bisimulation relations.

Since the arrival of coalgebra in the arena of the foundations of computer science, coinduction has been captured in terms of finality and coalgebraic bisimulation. These coalgebraic generalization also apply to these enhanced coinductive techniques. This has led to a very general and widely applicable formulation of up-to techniques, with very recent, new applications in, for instance, automata theory, the theory of infinite streams (Moessner’s theorem), and theorem proving.

In the light of these recent new and exciting developments in both the theory and applications of coinduction, this workshop seeks to bring together world leading experts from the relevant disciplines in computer science, notably

- process algebra

- coalgebra

- automata theory

- functional programming

- logic and type theory

The aims of the workshop can then be summarised as follows:

- advancing the understanding of coinduction and its enhancements, both in terms of lattice theory and coalgebra;

- discussing recent applications of enhanced coinduction and looking for new areas and problems where applications can be found;

- all of this through a cross-fertilisation between the different disciplines mentioned above.

Program

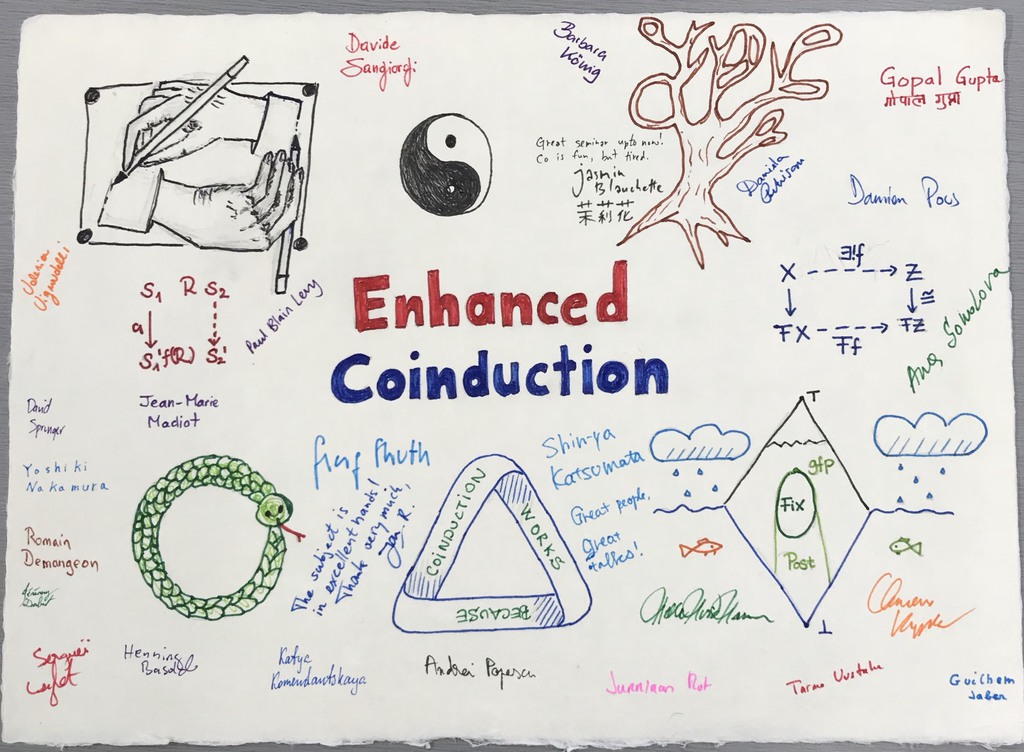

Participants

Henning Basold (CNRS – ENS Lyon)

Jasmin Blanchette (Vrije Universiteit Amsterdam)

Romain Demangeon (UPMC Paris)

Jérémy Dubut (National Institute of Informatics)

Adrien Durier (ENS Lyon)

Gopal Gupta (The University of Texas at Dallas)

Helle Hansen (Delft U)

Ichiro Hasuo (National Institute of Informatics)

Guilhem Jaber (ENS Lyon)

Shin-ya Katsumata (National Institute of Informatics)

Barbara Koenig (Duisburg Essen U)

Ekaterina Komendantskaya (University of Dundee)

Clemens Kupke (University of Strathclyde)

Serguei Lenglet (U Lorraine)

Paul-Blain Levy (U Birmingham)

Jean-Marie Madiot (Princeton U)

Yoshiki Nakamura (Tokyo Institute of Technology)

Daniela Petrisan (Université Paris Diderot)

Andrei Popescu (MU London)

Damien Pous (CNRS – ENS Lyon)

Jurriaan Rot (Radboud University Nijmegen)

Jan Rutten (CWI and Radboud University Nijmegen)

Davide Sangiorgi (U Bologna)

Ana Sokolova (University of Salzburg)

David Sprunger (National Institute of Informatics)

Georg Struth (U Sheffield)

Tarmo Uustalu (Reykjavik U)

Valeria Vignudelli (CNRS – ENS Lyon)

Talk Abstracts

Henning Basold A Recursive Logic for the Equivalence of Inductive-Coinductive Programs

In this talk, I will present a logic the behavioural equivalence of inductive-coinductive programs. Crucially, the logic’s proof system features no explicit induction nor coinduction principle. Instead, equivalences can be proven in that proof system by recursive proofs, that is, by proofs that may refer to themselves. However, we need to restrict the use of such references, since arbitrary self-references would temper with the soundness of the proof system. In the presented system, this will be achieved by using the so-called later modality. A crucial difference to cyclic proof systems is that the later modality gives rise to a local correctness condition, that is, every application of a proof rule produces only a correct proof tree with valid back-references. This enables simple proof checking and allows for a compositional soundness proof, as I will demonstrate.

Jasmin Blanchette Foundational Codatatypes for Higher-Order Logic

I will describe a line of work that started in 2011 towards enriching Isabelle/HOL’s language with coinductive datatypes and with a more expressive notion of inductive datatype than previously supported by any system based on higher-order logic. These codatatypes are complemented by definitional principles for corecursive functions and reasoning principles for coinduction. In contrast with other systems offering codatatypes, no additional axioms or logic extensions are necessary with our approach.

Romain Demangeon Computational Complexity for Processes

We define a notion of computational complexity tailored for processes, seen as services. A notion of causality (a la Degano-Priami) allows us to count transitions which are caused by a same initial request received from the outside, and compare it with the content of the request.

We present a static analysis (a type system and a set of syntactic constraints) to guarantee that a process is polynomial w.r.t. our definition of complexity. The type system is built upon the Deng-Sangiorgi type system for termination, adapting a well known Implicit Computational Complexity result (Bellantoni-Cook). Constraints ensure no integer received from the outside interfer with the controlled computation flow

Because our analysis is syntactic — we check if a process can be type-checked and if it abides to flow constraints, our results (soundness and completeness) are not stable w.r.t. the behaviour of processes. For instance, P can be rejected while Q, bisimilar to P, is accepted. An interesting open problem is to study completeness up-to a well-chosen equivalence: “Any polynomial process is equivalent to an accepted process”.

Jérémy Dubut Bisimilarity via open morphisms and bisimilarity of diagrams

I will describe a line of work from Joyal, Nielsen and Winskel of a(nother) theory of bisimilarity through the lens of category theory, using morphisms having lifting properties with respect to “paths”. I will review some examples of bisimilarities modeled in this setting, and describe how this abstract bisimilarity can be somehow related to more concrete definitions using bisimulation relations. I will then focus on one example, the case of bisimilarity of diagrams, namely functors from a small category to a specified category of values. This bisimilarity was introduced recently in a line of work on directed algebraic topology to describe the evolution of the data of a system with time. I will show you that this case is equivalent to the existence of a nice notion of bisimulations, which allows us to translate the problem of bisimilarity, to a problem of isomorphisms in the category of values, which is useful for (un)decidability. I will finally show you how to translate the general setting of Joyal et al, in the case where the category of paths is small, into diagrams, and I will describe the relations between the different bisimilarities involved.

Adrien Durier Divergence and unique solution of equations

I consider proof techniques for bisimilarity based on unique solution of equations. Essentially the technique says that an equation (or a system of equations) whose infinite unfolding never produces a divergence has the unique-solution property. I present the result in the operational setting of CCS and for weak bisimilarity, and then discuss extensions and variants. In contrast with the method based on contractions, here no auxiliary relations are needed.

Gopal Gupta Coinductive Logic Programming

Coinduction is a powerful technique for reasoning about unfounded sets, unbounded structures, infinite automata, and interactive computations. In this talk I will show how co-induction can be elegantly incorporated into logic programming to obtain the co-inductive logic programming (co-LP) paradigm. I will also discuss how co-LP can be elegantly used for sophisticated applications as well as discuss several open problems.

Gopal Gupta Proof Theoretic Foundations of Normal Logic Programs

We show that the myriad semantics of logic programming extended with negation can be comprehensively understood by casting them purely in terms of induction and coinduction. We show how a uniform proof-theoretic semantics can be given for various semantics of logic programming extended with negation. We also show how operational semantics (interpreter) can be developed for these semantics in a systematic way using SLD and co-SLD resolution. We describe the application of our insights to realizing the s(ASP) system that implements the stable model semantics in a query-driven manner without requiring any grounding of the program. The s(ASP) system—whose foundations lie in realizing coinductive behavior via coSLD resolution—-is the first ever predicate answer set programming system. An implementation of the s(ASP) system is publicly available and has been used to develop a number of novel applications. I will also discuss open problems that arise in this endeavor.

Helle Hansen A (co)algebraic perspective on long term values in Markov Decision Processes

Markov Decision Processes (MDPs) are probabilistic models used in planning under uncertainty. Given an MDP and a policy (i.e. a plan of action), the long term value is the long term expected reward when following the given policy. The aim is to find an optimal policy, i.e. a policy that maximises the long term value. The mathematical theory of MDPs is well understood classically. We take a fresh look on long term values and their defining Bellman equations using coalgebra and algebra. This is ongoing, joint work with Frank Feys and Larry Moss.

Ichiro Hasuo Least and Alternating Fixed-Point Specifications in Coalgebraic System Modeing

Final coalgebras as “categorical greatest fixed points” play a central role in the theory of coalgebras. Somewhat analogously, most proof methods studied therein have focused on greatest fixed-point properties like safety and bisimilarity. In this talk we outline our recent attempts to go beyond, that is, to accommodate other fixed points (such as least and alternating ones) in coalgebraic modeling. The talk will be based on the following papers: [Hasuo, Cirstea & Shimizu, POPL’16], [Urabe, Shimizu & Hasuo, CONCUR’16], [Urabe, Hara & Hasuo, LICS’17].

(Joint work with: Natsuki Urabe, Shunsuke Shimizu, Corina Cirstea and Masaki Hara)

Guilhem Jaber An Overview of Operational Techniques for Contextual Equivalence of Higher-order Programming Languages

These past thirty years have seen the development of many techniques to prove contextual equivalence of programs, starting from three seminal works:

- Applicative Bisimulations by Abramsky, that has led to Normal Form, Logical, and Environmental Bisimulations;

- Operational Logical Relations by Pitts & Stark, that has led to Step-Indexed Kripke Logical Relations;

- Game Semantics, by Abramsky, Jagadeesan & Malacaria, and Hyland & Ong, that has led to Algorithmic Game Semantics.

In this talk, we will present the main ideas behind these techniques, focusing on higher-order languages with local states. We will see how an operational presentation of game semantics, where the denotation of programs is computed by a labelled transition system, provides a framework to relate these different techniques.

Shin-ya Katsumata A Categorical Approach to Howe’s Method

Howe’s method is a method to prove that the applicative (bi)similarity is a (pre)congruence. I will talk about my ongoing work on the categorical formulation of its proof. The formulation is built upon an abstract treatment of the construction of precongruence candidate and the operational semantics of the language.

Barbara Koenig Up-To Techniques for Weighted Systems

We show how up-to techniques for (bi-)similarity can be used in the setting of weighted systems. The problems we consider are language equivalence, language inclusion and the threshold problem (also known as universality problem) for weighted automata.

We build a bisimulation relation on the fly and work up-to congruence and up-to similarity. This requires to determine whether a pair of vectors (over a semiring) is in the congruence closure of a given relation of vectors. This problem is considered for rings and l-monoids, for the latter we provide a rewriting algorithm and show its confluence and termination.

We then explain how to apply these up-to techniques to weighted automata and provide runtime results.

(Joint work with Filippo Bonchi & Sebastian Küpper)

Ekaterina Komendantskaya Coinduction for Uniform Proofs

In the mid 90s, Dale Miller, Frank Pfenning, Gopalan Nadathur and Co. proposed the notion of “Uniform Proof”, in an attempt to give a proof theoretic (sequent calculus) account to proof search engines (such as Prolog) that were popular at that time. Since then, Uniform Proofs gave rise to many applications of independent interest — e.g. Lambda Prolog and more recently Abella, to name a few.

Several attempts have been made to introduce coinduction in Uniform proofs, by means of introducing coinductive types: Bedwyr and Abella are examples. However, these coinductive systems, although being of independent value, were no longer designed or suited to serve as a proof-theoretic foundations for infinite/nonterminating proof search in logic programming style.

In this talk, I will show a new variant of coinductive uniform proofs, which gives a proof theoretic semantics to nonterminating proofs in Horn clause logic.

Clemens Kupke Learning bisimulation quotients

A key result in computational learning theory is Dana Angluin’s L* algorithm that describes how to learn a regular language by asking membership and equivalence queries to a teacher. I am going to present a coalgebraic generalisation of this algorithm that allows to learn the bisimulation quotient of a given (pointed) coalgebra. The complexity of our algorithm is essentially the same as L*’s when measured in the number of required queries – it remains to be seen, however, whether or not the implementation of a “good” teacher is feasible. Central to our algorithm are ideas from modal logic.

Reference: Simone Barlocco and Clemens Kupke. Angluin Learning via Logic (accepted for publication at LFCS 2018)

Serguei Lenglet Inception up to context

Up-to technique simplify equivalence proofs, as they imply bisimilarity with less restrictive conditions to check. In particular, bisimulation up to context allows us to equate terms put in a common context. We present an enhanced bisimulation up to context, particularly useful for calculi manipulating contexts in their semantics, such as calculi with control operators. To prove it sound, we extend Madiot et al. framework for soundness proofs for up-to techniques.

Paul-Blain Levy Trace semantics in a final coalgebra (Work in progress)

It is well known that deterministic I/O processes, up to trace equivalence, or equivalently up to bisimilarity, form a final coalgebra for a polynomial functor on Set.

We show that nondeterministic or probabilistic I/O processes, up to trace equivalence, form a final coalgebra for a polynomial functor on semilattices (for nondeterminism) or on convex spaces (for probability).

Jean-Marie Madiot Coinductive methods in compiler correctness

We explore coinductive aspects of compiler correctness, starting from those of the certified compiler CompCert. Sadly, compilation is not a bisimulation: indeed, in order to allow optimisations, unsafe programs can be compiled to safe programs, but the other way around is rather undesirable. At the same time, compilers are able to choose and hence reduce non-determinism, so in the end we have refinements in both directions. Fortunately, partial determinism is helpful with going from one direction to the other. In this talk, I explain how partial determinism interacts with simulations and well-founded relations, and will show details and complications of simulation enhancements.

Yoshiki Nakamura Partial Derivatives for Relation Models

Brzozowski’s derivatives and Antimirov’ partial derivatives are techniques for constructing finite automata in formal language theory. I will introduce a definition of partial derivatives for relation models. I will also introduce algorithms for equivalence problems or non-emptiness (satisfiability) problems induced by it.

Daniela Petrisan Automata as functors: simulations and up-to techniques

In recent work [1] we proposed modelling automata as functors from an “input” category specifying the structure of the input processed by the automata (e.g. finite words, finite or infinite trees) to an “output” category specifying the output values (e.g. Boolean values, words, probabilities). In this talk, I will discuss on-going work about how one can fit simulations and up-to techniques in this framework.

[1] Thomas Colcombet and Daniela Petrisan. Automata minimization: a functorial approach, CALCO 2017

Andrei Popescu Friendly corecursion and coinduction in Isabelle/HOL

I will discuss the recently developed Isabelle/HOL infrastructure for defining functions by corecurion and proving facts about them by coinduction. I will show how the corecursion and coinduction schemes associated to a codatatype evolve in tandem by learning of new “friendly” operators from the user.

(Joint work with Jasmin Blanchette, Dmitriy Traytel, Andreas Lochbihler and others.)

Damien Pous Companion of a monotone function

We revisit coinductive proof principles from a lattice theoretic point of view.

By associating to any monotone function a function which we call the companion, we give a new presentation of 1/ Knaster-Tarski’s seminal result, 2/ the theory of enhancements of the coinductive proof method (up-to techniques), and 3/ parameteriwed coinduction, as proposed by Hur et al.

Jurriaan Rot On the companion of a functor

In the lattice-theoretic formulation of coinduction and up-to techniques, the companion appears as the largest valid technique for a given coinductive predicate. In this talk I will move from lattice-theoretic coinduction to universal coalgebra and introduce the companion of a functor. This companion gives rise to a to a construction of causal operations (algebras) on final coalgebras such as streams and languages, to new coiteration principles and, ultimately, to valid enhancements of the coinductive proof method.

Davide Sangiorgi Equations, contractions, and unique solutions

I present a proof method for bisimilarity based on unique solution of special forms of inequations called contractions, and inspired by Milner’s theorem on unique solution of equations. The method is as powerful as the bisimulation proof method and its `up-to context’ enhancements. The definition of contraction can be transferred onto other behavioural equivalences, possibly contextual and non-coinductive.

Ana Sokolova The power of convex algebras

Probabilistic automata (PA) combine probability and nondeterminism. They can be given different semantics, like strong bisimilarity, convex bisimilarity, or (more recently) distribution bisimilarity. The latter is based on the view of PA as transformers of probability distributions, also called belief states, and promotes distributions to first-class citizens.

We give a coalgebraic account of the latter semantics, and explain the genesis of the belief- state transformer from a PA. To do so, we make explicit the convex algebraic structure present in PA and identify belief-state transformers as transition systems with state space that carries a convex algebra. As a consequence of our abstract approach, we can give a sound proof technique which we call bisimulation up-to convex hull.

David Sprunger Up-to techniques in metric spaces

I will discuss ongoing work regarding metric space coalgebras, their behavioral metrics and up-to techniques.

Georg Struth Generalised Kripke Semantics for Concurrent Quantales

I present ongoing work on a new technique for constructing models of concurrent quantales (and concurrent Kleene algebras). It is inspired by modal correspondence theory for substructural logics. Frame conditions are given by ternary relations. The operations of concurrent quantales arise as binary modalities — in fact convolution operations — over quantale-valued functions, parametrised by the ternary frames. The main result is a correspondence theorem between concurrent quantales and frame conditions. A first example constructs concurrent quantales and Kleene algebras of weighted shuffle languages from word-level concatenation and shuffle operations. The second one builds concurrent quantales of graphs (or graph types) by lifting from the operations of complete join and disjoint union on individual graphs. The concurrent quantales of pomset languages arise as a special case. Similar constructions in separation logic or interval temporal logics illustrate the scope of the approach, if time permits.

Joint work with James Cranch, Simon Doherty, Brijesh Dongol, Ian Hayes

Tarmo Uustalu A coalgebraic view of bar recursion and bar induction

We reformulate the bar recursion and induction principles in terms of recursive and wellfounded coalgebras. Bar induction was originally proposed by Brouwer as an axiom to recover certain classically valid theorems in a constructive setting. It is a form of induction on non-wellfounded trees satisfying certain properties. Bar recursion, introduced later by Spector, is the corresponding function definition principle.

We give a generalization of these principles, by introducing the notion of barred coalgebra: a process with a branching behaviour given by a functor, such that all possible computations terminate.

Coalgebraic bar recursion is the statement that every barred coalgebra is recursive; a recursive coalgebra is one that allows definition of functions by a coalgebra-to-algebra morphism. One application of this principle is tabulation of continuous functions: Ghani, Hancock and Pattinson defined a type of wellfounded trees that represent continuous functions on streams. Bar recursion allows us to prove that every stably continuous function can be tabulated to such a tree, where by stability we mean that the modulus of continuity is its own modulus.

Coalgebraic bar induction states that every barred coalgebra is wellfounded; a wellfounded coalgebra is one that admits proof by a form of induction.

This is joint work with Venanzio Capretta, University of Nottingham, published in Proc. of FoSSaCS 2016. Time permitting, I might also discuss our related ongoing work.

Valeria Vignudelli Coinductive proof techniques for probabilistic trace equivalences

Several different definitions of trace equivalences and preorders for probabilistic automata, i.e., labelled transition systems combining both probabilistic and nondeterministic choices, have been proposed. We show that a class of probabilistic trace equivalences, in particular those inspired by definitions of may and must probabilistic testing equivalences, can be characterized coinductively. This is carried out by defining (bi)simulations on a determinized version of the probabilistic automaton, via a powerset construction. We discuss how these results can be studied in a coalgebraic framework, and how they allow us to derive proof techniques for probabilistic trace equivalences.

Organizers

Shin-ya Katsumata (National Institute of Informatics)

Damien Pous (CNRS – ENS Lyon)

Jan Rutten (CWI and Radboud University Nijmegen)